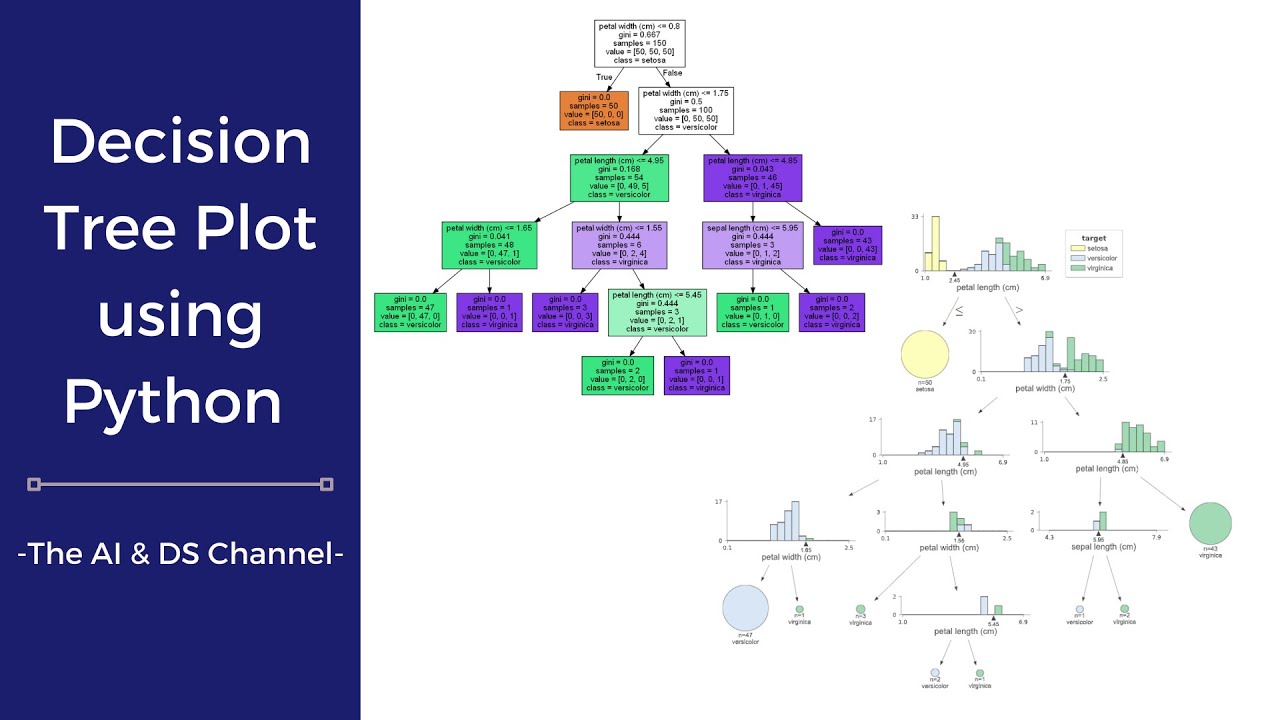

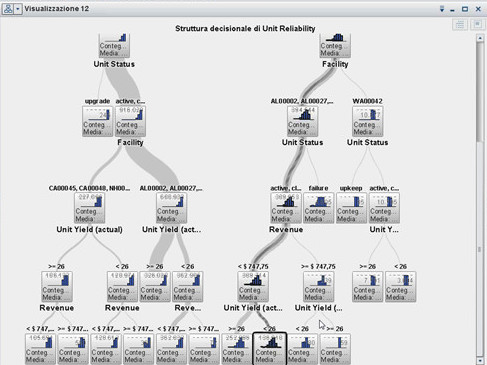

Before we get into the details of ID3, let us examine how decision trees work. We start with a data set in which each row of the data set corresponds to a single instance. The columns of the data set are the attributes, where the rightmost column is the class label. Each attribute can take on a set of values. These values are used to split the instances in the data set into subsets (i.e. …). For example, the weather outlook attribute can be used to create a subset of ‘sunny’ instances, ‘overcast’ instances, and ‘rainy’ instances. We then split each of those subsets even further based on another attribute.

The objective behind building a decision tree is to use the attribute values to keep splitting the data into smaller and smaller subsets (recursively) until each subset consists of a single class label (e.g. …). We can then classify fresh test instances based on the rules defined by the tree.

Starting from the root of the tree, ID3 builds the decision tree one interior node at a time, where at each node we select the attribute which provides the most information gain if we were to split the instances into subsets based on the values of that attribute. How is “most information” determined? It is determined using the idea of entropy reduction, which is part of Shannon’s Information Theory.

Entropy is a measure of the amount of disorder or uncertainty (units of entropy are bits). A data set with a lot of disorder or uncertainty does not provide us a lot of information. Another way to think about entropy is how certain we would feel if we were to guess the class of a random training instance. A data set in which there is only one class has 0 entropy (high information here because we know with 100% certainty what the class of any instance is). However, if there is a data set in which each instance is a different class, entropy would be high because you have no certainty when trying to predict the class of a random instance.

Entropy is thus a measure of how heterogeneous a data set is. If there are many different class types in a data set, and each class type has the same probability of occurring, the entropy (also called impurity) of the data set will be high. The greater the entropy is, the higher the uncertainty, and the less information we have.

Returning to the running example, in a binary classification problem with positive instances p (play tennis) and negative instances n (don’t play tennis), the entropy contained in a data set is defined mathematically as follows (base 2 log is used as convention in Shannon’s Information Theory):

Entropy = -(p/(p+n))log2(p/(p+n)) + -(n/(p+n))log2(n/(p+n))

= weighted sum of the logs of the probabilities of each possible outcome

where:

p = number of positive instances (play tennis)
n = number of negative instances (don’t play tennis)

The negative sign at the beginning of the equation is used to change the negative values returned by the log function to positive values (Kelleher, Mac Namee, & D’Arcy, 2015). This negation ensures that the logarithmic term returns small numbers for high probabilities (low entropy, greater certainty) and large numbers for small probabilities (higher entropy, less certainty).

The weights in the summation are the actual probabilities themselves. These weights make sure that classes that appear most frequently in a data set make the biggest impact on the entropy of the data set.

Notice that if there are only instances with class p (i.e. play tennis) and no instances with class n (i.e. don’t play tennis) in the data set, the entropy will be 0:

Entropy = -(p/(p+0))log2(p/(p+0)) + -(0/(p+0))log2(0/(p+0)) = 0

(The second term is taken to be 0 by the usual convention that 0 × log2(0) = 0.)

It is easiest to explain the full ID3 algorithm using actual numbers, so below I will demonstrate how the ID3 algorithm works using an example.

ID3 Example

Continuing from the example in the previous section, we want to create a decision tree that will help us determine if we should play tennis or not.

Calculate the Prior Entropy of the Data Set

Calculate the total number of instances in the data set. Total instances: 9 instances of p and 5 instances of n, 14 in all. With the frequency counts of each unique class, we can calculate the prior entropy of this data set:

Entropy = -(9/14)log2(9/14) + -(5/14)log2(5/14) ≈ 0.940 bits

The value above is indicative of how much entropy is remaining prior to doing any splitting.

Calculate the Information Gain for Each Attribute

For each attribute in the data set (e.g. …)
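To make the entropy and information gain calculations concrete, here is a minimal Python sketch. The function names `entropy` and `information_gain` are my own choices for illustration, and the two splits in the usage lines are hypothetical extremes (a perfect split and no split at all), not attributes from the tennis data set. Information gain is computed here in the usual way, as the entropy before a split minus the size-weighted entropy of the subsets after it.

```python
import math


def entropy(p, n):
    """Entropy in bits of a set with p positive and n negative instances."""
    total = p + n
    if total == 0:
        return 0.0
    result = 0.0
    for count in (p, n):
        if count > 0:  # a class with zero instances contributes nothing
            prob = count / total
            result -= prob * math.log2(prob)
    return result


def information_gain(parent, subsets):
    """Entropy of the parent minus the size-weighted entropy of its subsets.

    parent  -- (p, n) counts before the split
    subsets -- list of (p, n) counts, one per attribute value
    """
    total = sum(parent)
    remaining = sum((p + n) / total * entropy(p, n) for p, n in subsets)
    return entropy(*parent) - remaining


# Prior entropy of a data set with 9 positive and 5 negative instances.
print(round(entropy(9, 5), 3))

# A data set with a single class has zero entropy.
print(entropy(9, 0))

# Hypothetical extremes: a split that separates the classes perfectly
# recovers all of the prior entropy; a "split" into one subset gains nothing.
print(round(information_gain((9, 5), [(9, 0), (0, 5)]), 3))
print(information_gain((9, 5), [(9, 5)]))
```

At each node, ID3 would evaluate something like `information_gain` once per candidate attribute and pick the attribute with the largest value.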
